
NTSC (from National Television System Committee) is the first American standard for analog television, published and adopted in 1941.[1] In 1961, it was assigned the designation System M. It is also known as EIA standard 170.[2]

In 1953, a second NTSC standard was adopted,[3] which allowed for color television broadcast compatible with the existing stock of black-and-white receivers.[4][5][6] It is one of three major color formats for analog television, the others being PAL and SECAM. NTSC color is usually associated with System M; this combination is sometimes called NTSC II.[7][8] The only other broadcast television system to use NTSC color was System J. Brazil used System M with PAL color. Vietnam, Cambodia and Laos used System M with SECAM color; Vietnam later started using PAL in the early 1990s.

The NTSC/System M standard was used in most of the Americas (except Argentina, Brazil, Paraguay, and Uruguay), Myanmar, South Korea, Taiwan, the Philippines, Japan, and some Pacific Islands nations and territories (see map).

Since the introduction of digital sources (e.g., DVD), the term NTSC has been used to refer to digital formats with between 480 and 487 active lines and a frame rate of 30 or 29.97 frames per second, serving as a digital shorthand for System M. The so-called NTSC-Film standard has a digital standard resolution of 720 × 480 pixels for DVD-Video, 480 × 480 pixels for Super Video CDs (SVCD, aspect ratio 4:3) and 352 × 240 pixels for Video CDs (VCD).[9] The digital video (DV) camcorder format that is equivalent to NTSC is 720 × 480 pixels.[10] The digital television (DTV) equivalent is 704 × 480 pixels.[10]


The National Television System Committee was established in 1940 by the United States Federal Communications Commission (FCC) to resolve the conflicts between companies over the introduction of a nationwide analog television system in the United States. In March 1941, the committee issued a technical standard for black-and-white television that built upon a 1936 recommendation made by the Radio Manufacturers Association (RMA). Advances in the vestigial sideband technique made it possible to increase the image resolution. The NTSC selected 525 scan lines as a compromise between RCA's 441-scan line standard (already being used by RCA's NBC TV network) and Philco's and DuMont's desire to increase the number of scan lines to between 605 and 800.[11] The standard recommended a frame rate of 30 frames (images) per second, consisting of two interlaced fields per frame at 262.5 lines per field and 60 fields per second. Other standards in the final recommendation were an aspect ratio of 4:3 and frequency modulation (FM) for the sound signal (which was quite new at the time).

In January 1950, the committee was reconstituted to standardize color television. The FCC had briefly approved a 405-line field-sequential color television standard in October 1950, which was developed by CBS.[12] The CBS system was incompatible with existing black-and-white receivers. It used a rotating color wheel, reduced the number of scan lines from 525 to 405, and increased the field rate from 60 to 144, but had an effective frame rate of only 24 frames per second. Legal action by rival RCA kept commercial use of the system off the air until June 1951, and regular broadcasts only lasted a few months before manufacture of all color television sets was banned by the Office of Defense Mobilization in October, ostensibly due to the Korean War.[13][14][15][16] A variant of the CBS system was later used by NASA to broadcast pictures of astronauts from space.[citation needed] CBS rescinded its system in March 1953,[17] and the FCC replaced it on December 17, 1953, with the NTSC color standard, which was cooperatively developed by several companies, including RCA and Philco.[18]

In December 1953, the FCC unanimously approved what is now called the NTSC color television standard (later defined as RS-170a). The compatible color standard retained full backward compatibility with then-existing black-and-white television sets. Color information was added to the black-and-white image by introducing a color subcarrier of precisely 315/88 MHz (usually described as 3.579545 MHz ± 10 Hz).[19] The precise frequency was chosen so that the horizontal line-rate modulation components of the chrominance signal fall exactly in between the horizontal line-rate modulation components of the luminance signal, so that the chrominance signal could easily be filtered out of the luminance signal in new television sets and would be minimally visible on existing ones. Due to the limitations of frequency divider circuits at the time the color standard was promulgated, the color subcarrier frequency was constructed as a composite frequency assembled from small integers, in this case 5×7×9/(8×11) MHz.[20] The horizontal line rate was reduced to approximately 15,734 lines per second (3.579545×2/455 MHz = 9/572 MHz) from 15,750 lines per second, and the frame rate was reduced to 30/1.001 ≈ 29.970 frames per second (the horizontal line rate divided by 525 lines/frame) from 30 frames per second. These changes amounted to 0.1 percent and were readily tolerated by then-existing television receivers.[21][22]
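The rate relationships above can be checked with exact rational arithmetic. The following is a small sketch using Python's standard `fractions.Fraction`; the constant names are illustrative, and the only inputs are the 5×7×9/(8×11) MHz subcarrier, the 455/2 ratio to the line rate, and 525 lines per frame.

```python
from fractions import Fraction

# Colour subcarrier built from small integers: 5*7*9 / (8*11) MHz = 315/88 MHz.
F_SC = Fraction(5 * 7 * 9, 8 * 11) * 1_000_000   # Hz

# The subcarrier is 455/2 times the line rate, so f_H = 2 * f_sc / 455.
F_H = 2 * F_SC / 455          # 9/572 MHz, about 15,734.27 Hz
F_FRAME = F_H / 525           # 525 lines per frame
F_FIELD = 2 * F_FRAME         # two interlaced fields per frame

print(f"subcarrier  {float(F_SC):,.3f} Hz")
print(f"line rate   {float(F_H):,.3f} Hz")
print(f"frame rate  {float(F_FRAME):.5f} Hz")
print(f"field rate  {float(F_FIELD):.5f} Hz")

# Line and frame rates are exactly the monochrome values divided by 1.001.
print(F_H == Fraction(15_750) / Fraction(1001, 1000))   # True
print(F_FRAME == Fraction(30) / Fraction(1001, 1000))   # True
```

Working in fractions rather than floats makes the "divided by 1.001" relationship an exact equality instead of an approximation.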

The first publicly announced network television broadcast of a program using the NTSC "compatible color" system was an episode of NBC's Kukla, Fran and Ollie on August 30, 1953, although it was viewable in color only at the network's headquarters.[23] The first nationwide viewing of NTSC color came on the following January 1 with the coast-to-coast broadcast of the Tournament of Roses Parade, viewable on prototype color receivers at special presentations across the country. The first color NTSC television camera was the RCA TK-40, used for experimental broadcasts in 1953; an improved version, the TK-40A, introduced in March 1954, was the first commercially available color television camera. Later that year, the improved TK-41 became the standard camera used throughout much of the 1960s.

The NTSC standard has been adopted by other countries, including some in the Americas and Japan.


With the advent of digital television, analog broadcasts were largely phased out. Most US NTSC broadcasters were required by the FCC to shut down their analog transmitters by February 17, 2009; however, this was later moved to June 12, 2009. Low-power stations, Class A stations and translators were required to shut down by 2015, although an FCC extension allowed some of those stations operating on Channel 6 to operate until July 13, 2021.[24] The remaining Canadian analog TV transmitters, in markets not subject to the mandatory transition in 2011, were scheduled to be shut down by January 14, 2022, under a schedule published by Innovation, Science and Economic Development Canada in 2017; however, the scheduled transition dates have already passed for several listed stations that continue to broadcast in analog (e.g. CFJC-TV Kamloops, which has not yet transitioned to digital, is listed as having been required to transition by November 20, 2020).[25]

Most countries using the NTSC standard, as well as those using other analog television standards, have switched to, or are in the process of switching to, newer digital television standards, of which at least four are in use around the world. North America, parts of Central America, and South Korea are adopting or have adopted the ATSC standards, while other countries, such as Japan, are adopting or have adopted other standards instead of ATSC. After nearly 70 years, the majority of over-the-air NTSC transmissions in the United States ceased on June 12, 2009,[26] and by August 31, 2011,[27] in Canada and most other NTSC markets.[28] The majority of NTSC transmissions ended in Japan on July 24, 2011, with the Japanese prefectures of Iwate, Miyagi, and Fukushima ending the next year.[27] After a pilot program in 2013, most full-power analog stations in Mexico left the air on ten dates in 2015, with some 500 low-power and repeater stations allowed to remain in analog until the end of 2016. Digital broadcasting allows higher-resolution television, but digital standard definition television continues to use the frame rate and number of lines of resolution established by the analog NTSC standard.


Resolution and refresh rate

NTSC color encoding is used with the System M television signal, which consists of 30⁄1.001 (approximately 29.97) interlaced frames of video per second. Each frame is composed of two fields, each consisting of 262.5 scan lines, for a total of 525 scan lines. The visible raster is made up of 486 scan lines. The later digital standard, Rec. 601, uses only 480 of these lines for the visible raster. The remainder (the vertical blanking interval) allows for vertical synchronization and retrace. This blanking interval was originally designed simply to blank the electron beam of the receiver's CRT, accommodating the simple analog circuits and slow vertical retrace of early TV receivers. However, some of these lines may now contain other data such as closed captioning and vertical interval timecode (VITC). In the complete raster (disregarding half lines due to interlacing), the even-numbered scan lines (every other line that would be even if counted in the video signal, e.g. {2, 4, 6, ..., 524}) are drawn in the first field, and the odd-numbered lines (every other line that would be odd if counted in the video signal, e.g. {1, 3, 5, ..., 525}) are drawn in the second field, to yield a flicker-free image at the field refresh frequency of 60⁄1.001 Hz (approximately 59.94 Hz). For comparison, 625-line (576 visible) systems, usually used with PAL-B/G and SECAM color, have a higher vertical resolution but a lower temporal resolution of 25 frames or 50 fields per second.

The NTSC field refresh frequency in the black-and-white system originally exactly matched the nominal 60 Hz frequency of alternating current power used in the United States. Matching the field refresh rate to the power source avoided intermodulation (also called beating), which produces rolling bars on the screen. Synchronization of the refresh rate to the power incidentally helped kinescope cameras record early live television broadcasts, as it was very simple to synchronize a film camera to capture one frame of video on each film frame by using the alternating current frequency to set the speed of the synchronous AC motor-drive camera. This, as mentioned, is how the NTSC field refresh frequency worked in the original black-and-white system; when color was added to the system, however, the refresh frequency was shifted slightly downward by 0.1%, to approximately 59.94 Hz, to eliminate stationary dot patterns in the difference frequency between the sound and color carriers (as explained below in § Color encoding). By the time the frame rate changed to accommodate color, it was nearly as easy to trigger the camera shutter from the video signal itself.

The actual figure of 525 lines was chosen as a consequence of the limitations of the vacuum-tube-based technologies of the day. In early TV systems, a master voltage-controlled oscillator was run at twice the horizontal line frequency, and this frequency was divided down by the number of lines used (in this case 525) to give the field frequency (60 Hz in this case). This frequency was then compared with the 60 Hz power-line frequency, and any discrepancy was corrected by adjusting the frequency of the master oscillator. For interlaced scanning, an odd number of lines per frame was required in order to make the vertical retrace distance identical for the odd and even fields,[clarification needed] which meant the master oscillator frequency had to be divided down by an odd number. At the time, the only practical method of frequency division was a chain of vacuum tube multivibrators, the overall division ratio being the product of the division ratios of the chain. Since all the factors of an odd number must themselves be odd, all the dividers in the chain also had to divide by odd numbers, and these had to be relatively small because of thermal drift in vacuum tube devices. The closest practical sequence to 500 that meets these criteria was 3×5×5×7=525. (For the same reason, 625-line PAL-B/G and SECAM use 5×5×5×5, the old British 405-line system used 3×3×3×3×5, the French 819-line system used 3×3×7×13, etc.)
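The divider argument can be turned into a small search. As an assumption made only for this sketch, "relatively small" is taken to mean odd divisors no larger than 7 (the 819-line example shows that other systems accepted a factor as large as 13), and the ±150-line search window is arbitrary. The function names are hypothetical.

```python
def small_odd_factors(n, max_factor=7):
    """Factor n into odd divisors <= max_factor; return None if that is impossible."""
    if n % 2 == 0:
        return None                    # interlacing as described needs an odd line count
    factors = []
    for p in range(3, max_factor + 1, 2):   # trial division by odd numbers
        while n % p == 0:
            factors.append(p)
            n //= p
    return factors if n == 1 else None


def closest_line_count(target=500, search=150, max_factor=7):
    """Line count nearest `target` that a chain of small odd dividers can produce."""
    candidates = [n for n in range(target - search, target + search + 1)
                  if small_odd_factors(n, max_factor)]
    return min(candidates, key=lambda n: abs(n - target))


best = closest_line_count()
print(best, small_odd_factors(best))         # 525 [3, 5, 5, 7]
print(small_odd_factors(625))                # [5, 5, 5, 5]
print(small_odd_factors(819, max_factor=13)) # [3, 3, 7, 13]
```

Within the window, the other qualifying counts are 375, 405, 441, 567 and 625; of these, 441 (RCA's earlier standard) is the next closest to 500.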

Colorimetry

Colorimetry refers to the specific colorimetric characteristics of the system and its components, including the specific primary colors used, the camera, the display, etc. Over its history, NTSC color had two distinctly defined colorimetries, shown on the accompanying chromaticity diagram as NTSC 1953 and SMPTE C. Manufacturers introduced a number of variations for technical, economic, marketing, and other reasons.[29]

| Color space | White point x | White point y | CCT (K) | Red x | Red y | Green x | Green y | Blue x | Blue y |
|---|---|---|---|---|---|---|---|---|---|
| NTSC (1953) | 0.31 | 0.316 | 6774 (C) | 0.67 | 0.33 | 0.21 | 0.71 | 0.14 | 0.08 |
| SMPTE C | 0.3127 | 0.329 | 6500 (D65) | 0.63 | 0.34 | 0.31 | 0.595 | 0.155 | 0.07 |

White points and primaries are given as CIE 1931 xy chromaticity coordinates.
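The primaries and white point in the table are enough to derive each system's linear RGB-to-XYZ matrix with the usual normalize-to-white procedure: convert each chromaticity to XYZ, then scale the primaries so that equal RGB reproduces the white point. The sketch below is plain Python (no NumPy), and its helper names are illustrative. With the table's rounded Illuminant C coordinates, the middle (Y) row of the 1953 matrix comes out close to the familiar 0.299/0.587/0.114 luma weights.

```python
def xy_to_xyz(x, y):
    """CIE xy chromaticity -> XYZ tristimulus with Y normalised to 1."""
    return (x / y, 1.0, (1.0 - x - y) / y)


def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(m, v):
    """Solve m @ s = v for s using Cramer's rule."""
    d = det3(m)
    result = []
    for col in range(3):
        mc = [list(row) for row in m]
        for r in range(3):
            mc[r][col] = v[r]
        result.append(det3(mc) / d)
    return result


def rgb_to_xyz_matrix(primaries, white):
    """Linear RGB -> XYZ matrix from primary and white-point chromaticities."""
    cols = [xy_to_xyz(x, y) for x, y in primaries]
    p = [[cols[c][r] for c in range(3)] for r in range(3)]   # primaries as columns
    # Scale each primary so that R = G = B = 1 reproduces the white point.
    s = solve3(p, xy_to_xyz(*white))
    return [[p[r][c] * s[c] for c in range(3)] for r in range(3)]


NTSC_1953 = {"primaries": [(0.67, 0.33), (0.21, 0.71), (0.14, 0.08)],
             "white": (0.31, 0.316)}        # Illuminant C
SMPTE_C = {"primaries": [(0.63, 0.34), (0.31, 0.595), (0.155, 0.07)],
           "white": (0.3127, 0.329)}        # D65

for name, cs in (("NTSC 1953", NTSC_1953), ("SMPTE C", SMPTE_C)):
    m = rgb_to_xyz_matrix(cs["primaries"], cs["white"])
    print(name)
    for row in m:
        print("  " + "  ".join(f"{v:8.4f}" for v in row))
```

Published matrices may differ in the fourth decimal place depending on how precisely the white point is specified.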

NTSC 1953

The original 1953 color NTSC specification, still part of the United States Code of Federal Regulations, defined the colorimetric values of the system as shown in the above table.[30]

Early color television receivers, such as the RCA CT-100, were faithful to this specification (which was based on prevailing motion picture standards), having a larger gamut than most of today's monitors. Their low-efficiency phosphors (notably the red) were weak and long-persistent, leaving trails after moving objects. Starting in the late 1950s, picture tube phosphors would sacrifice saturation for increased brightness; this deviation from the standard at both the receiver and broadcaster was the source of considerable color variation.

SMPTE C

To ensure more uniform color reproduction, some manufacturers incorporated color correction circuits into sets that converted the received signal, encoded for the colorimetric values listed above, to compensate for the actual phosphor characteristics used within the monitor. Because such color correction cannot be performed accurately on the nonlinear, gamma-corrected signals that are transmitted, the adjustment can only be approximated, introducing both hue and luminance errors for highly saturated colors.

Similarly, at the broadcaster stage, in 1968–69 the Conrac Corp., working with RCA, defined a set of controlled phosphors for use in broadcast color picture video monitors.[31] This specification survives today as the SMPTE C phosphor specification.[32]

As with home receivers, it was further recommended[33] that studio monitors incorporate similar color correction circuits so that broadcasters would transmit pictures encoded for the original 1953 colorimetric values, in accordance with FCC standards.

In 1987, the Society of Motion Picture and Television Engineers (SMPTE) Committee on Television Technology, Working Group on Studio Monitor Colorimetry, adopted the SMPTE C (Conrac) phosphors for general use in Recommended Practice 145,[34] prompting many manufacturers to modify their camera designs to directly encode for SMPTE C colorimetry without color correction,[35] as approved in SMPTE standard 170M, "Composite Analog Video Signal – NTSC for Studio Applications" (1994). As a consequence, the ATSC digital television standard states that for 480i signals, SMPTE C colorimetry should be assumed unless colorimetric data is included in the transport stream.[36]

Japanese NTSC never changed primaries and whitepoint to SMPTE C, continuing to use the 1953 NTSC primaries and whitepoint.[33] Both the PAL and SECAM systems used the original 1953 NTSC colorimetry as well until 1970;[33] unlike NTSC, however, the European Broadcasting Union (EBU) rejected color correction in receivers and studio monitors that year and instead explicitly called for all equipment to directly encode signals for the "EBU" colorimetric values.[37]

Color compatibility issues

In reference to the gamuts shown on the CIE chromaticity diagram (above), the variations between the different colorimetries can result in significant visual differences. Proper viewing requires gamut mapping via lookup tables (LUTs) or additional color grading. SMPTE Recommended Practice RP 167-1995 refers to such an automatic correction as an "NTSC corrective display matrix."[38] For instance, material prepared for 1953 NTSC may look desaturated when displayed on SMPTE C or ATSC/BT.709 displays, and may also exhibit noticeable hue shifts. On the other hand, SMPTE C materials may appear slightly more saturated on BT.709/sRGB displays, or significantly more saturated on P3 displays, if the appropriate gamut mapping is not performed.
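One rough way to see the size of the difference is to compare the areas of the two primary triangles in the xy plane with the shoelace formula. This is only a coarse proxy, since the xy diagram is not perceptually uniform; the primaries are taken from the colorimetry table earlier in this section.

```python
def triangle_area(p1, p2, p3):
    """Shoelace formula for the area of a triangle in the CIE 1931 xy plane."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


NTSC_1953 = [(0.67, 0.33), (0.21, 0.71), (0.14, 0.08)]   # R, G, B
SMPTE_C = [(0.63, 0.34), (0.31, 0.595), (0.155, 0.07)]   # R, G, B

a_1953 = triangle_area(*NTSC_1953)
a_smpte_c = triangle_area(*SMPTE_C)
print(f"NTSC 1953 gamut area: {a_1953:.4f}")
print(f"SMPTE C gamut area:   {a_smpte_c:.4f}")
print(f"ratio SMPTE C / 1953: {a_smpte_c / a_1953:.2f}")
```

By this measure the SMPTE C triangle covers roughly two thirds of the 1953 triangle, which is consistent with 1953-encoded material looking desaturated on SMPTE C displays when no correction is applied.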

Color encoding


NTSC uses a luminance-chrominance encoding system, incorporating concepts invented in 1938 by Georges Valensi. Using a separate luminance signal maintained backward compatibility with black-and-white television sets in use at the time; only color sets would recognize the chroma signal, which was essentially ignored by black and white sets.

The red, green, and blue primary color signals are weighted and summed into a single luma signal, designated Y′ (Y prime),[39] which takes the place of the original monochrome signal. The color difference information is encoded into the chrominance signal, which carries only the color information. This allows black-and-white receivers to display NTSC color signals by simply ignoring the chrominance signal. Some black-and-white TVs sold in the U.S. after the introduction of color broadcasting in 1953 were designed to filter chroma out, but the early B&W sets did not do this, and chrominance could be seen as a crawling dot pattern in areas of the picture that held saturated colors.[40]

To derive the separate signals containing only color information, the difference is determined between each color primary and the summed luma. Thus the red difference signal is R′ − Y′ and the blue difference signal is B′ − Y′. These difference signals are then used to derive two new color signals known as I (in-phase) and Q (in quadrature) in a process called QAM. The IQ color space is rotated relative to the difference-signal color space, such that orange-blue color information (to which the human eye is most sensitive) is transmitted on the I signal at 1.3 MHz bandwidth, while the Q signal encodes purple-green color information at 0.4 MHz bandwidth; this allows the chrominance signal to use less overall bandwidth without noticeable color degradation. The two signals each amplitude-modulate[41] 3.58 MHz carriers that are 90 degrees out of phase with each other,[42] and the results are added together with the carriers themselves suppressed.[43][41] The result can be viewed as a single sine wave with varying phase relative to a reference carrier and with varying amplitude. The varying phase represents the instantaneous color hue captured by a TV camera, and the amplitude represents the instantaneous color saturation. This modulated 3.579545 MHz subcarrier is then added to the luminance to form the composite color signal,[41] which modulates the video signal carrier. 3.58 MHz is often used as an abbreviation for 3.579545 MHz.[44]
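A minimal sketch of the luma and color-difference step, assuming gamma-corrected R′G′B′ values in the range 0 to 1, the standard 0.299/0.587/0.114 luma weights, the conventional scale factors 0.492 (for B′ − Y′) and 0.877 (for R′ − Y′), and I/Q axes rotated 33° from those scaled difference axes. The function name is illustrative. The length of the (I, Q) vector is the saturation carried by the subcarrier amplitude.

```python
import math


def rgb_to_yiq(r, g, b):
    """Gamma-corrected R'G'B' (0..1) -> (Y', I, Q)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b      # luma: the compatible B&W signal
    u = 0.492 * (b - y)                        # scaled B' - Y'
    v = 0.877 * (r - y)                        # scaled R' - Y'
    c, s = math.cos(math.radians(33)), math.sin(math.radians(33))
    i = v * c - u * s                          # in-phase: orange-blue axis
    q = v * s + u * c                          # quadrature: purple-green axis
    return y, i, q


for name, rgb in {"red": (1, 0, 0), "green": (0, 1, 0), "blue": (0, 0, 1),
                  "white": (1, 1, 1)}.items():
    y, i, q = rgb_to_yiq(*rgb)
    sat = math.hypot(i, q)                     # subcarrier amplitude ~ saturation
    print(f"{name:5s} Y'={y:.3f} I={i:+.3f} Q={q:+.3f} chroma={sat:.3f}")
```

For pure red this gives I ≈ 0.596 and Q ≈ 0.211, matching the commonly quoted YIQ matrix; for white both color signals vanish, which is why a monochrome set sees only Y′.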

For a color TV to recover hue information from the color subcarrier, it must have a zero-phase reference to replace the previously suppressed carrier. The NTSC signal includes a short sample of this reference signal, known as the colorburst, located on the back porch of each horizontal synchronization pulse. The colorburst consists of a minimum of eight cycles of the unmodulated (pure original) color subcarrier. The TV receiver has a local oscillator, which is synchronized with these color bursts to create a reference signal. Combining this reference phase signal with the chrominance signal allows the recovery of the I and Q signals, which, together with the Y′ signal, are converted back into the individual R′, G′ and B′ signals that are then sent to the CRT to form the image.
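The suppressed-carrier quadrature modulation and its recovery against a burst-locked reference can be sketched numerically. Several simplifying assumptions apply: samples are taken at four times the subcarrier frequency, I rides on a cosine and Q on a sine of the same carrier, I and Q are held constant over the window, averaging over whole subcarrier cycles stands in for the receiver's low-pass filter, and NTSC's actual burst phase offsets are ignored. The `ref_phase` parameter models an error in the receiver's lock to the burst.

```python
import math

F_SC = 315e6 / 88            # colour subcarrier, Hz
FS = 4 * F_SC                # assumed sampling rate


def modulate(i, q, n_samples):
    """Suppressed-carrier QAM: I on cos, Q on sin; no carrier term is added."""
    w = 2 * math.pi * F_SC / FS
    return [i * math.cos(w * n) + q * math.sin(w * n) for n in range(n_samples)]


def demodulate(chroma, ref_phase=0.0):
    """Product detector using a local reference; ref_phase is a lock error in radians."""
    w = 2 * math.pi * F_SC / FS
    n = len(chroma)
    i = 2 / n * sum(c * math.cos(w * k + ref_phase) for k, c in enumerate(chroma))
    q = 2 / n * sum(c * math.sin(w * k + ref_phase) for k, c in enumerate(chroma))
    return i, q


chroma = modulate(0.3, -0.2, 400)             # 100 subcarrier cycles
print(demodulate(chroma))                     # approximately (0.3, -0.2)

i_err, q_err = demodulate(chroma, math.radians(10))   # 10 degree reference error
hue_shift = math.degrees(math.atan2(q_err, i_err) - math.atan2(-0.2, 0.3))
print(f"hue shift: {hue_shift:.3f} degrees")  # about 10
```

A reference phase error rotates the recovered (I, Q) vector by the same angle without changing its length, so it appears on screen as a hue error rather than a saturation error.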

In CRT televisions, the NTSC signal is turned into three color signals: red, green, and blue, each controlling an electron gun that is designed to excite only the corresponding red, green, or blue phosphor dots. TV sets with digital circuitry use sampling techniques to process the signals but the result is the same. For both analog and digital sets processing an analog NTSC signal, the original three color signals are transmitted using three discrete signals (Y, I and Q) and then recovered as three separate colors (R, G, and B) and presented as a color image.

When a transmitter broadcasts an NTSC signal, it amplitude-modulates a radio-frequency carrier with the NTSC signal just described, while it frequency-modulates a carrier 4.5 MHz higher with the audio signal. If non-linear distortion happens to the broadcast signal, the 3.579545 MHz color carrier may beat with the sound carrier to produce a dot pattern on the screen. To make the resulting pattern less noticeable, designers adjusted the original 15,750 Hz scanline rate down by a factor of 1.001 (0.1%) to match the audio carrier frequency divided by the factor 286, resulting in a field rate of approximately 59.94 Hz. This adjustment ensures that the difference between the sound carrier and the color subcarrier (the most problematic intermodulation product of the two carriers) is an odd multiple of half the line rate, which is the necessary condition for the dots on successive lines to be opposite in phase, making them least noticeable.

The 59.94 rate is derived from the following calculations. Designers chose to make the chrominance subcarrier frequency an n + 0.5 multiple of the line frequency to minimize interference between the luminance signal and the chrominance signal. (Another way this is often stated is that the color subcarrier frequency is an odd multiple of half the line frequency.) They then chose to make the audio subcarrier frequency an integer multiple of the line frequency to minimize visible (intermodulation) interference between the audio signal and the chrominance signal. The original black-and-white standard, with its 15,750 Hz line frequency and 4.5 MHz audio subcarrier, does not meet these requirements, so designers had to either raise the audio subcarrier frequency or lower the line frequency. Raising the audio subcarrier frequency would prevent existing (black and white) receivers from properly tuning in the audio signal. Lowering the line frequency is comparatively innocuous, because the horizontal and vertical synchronization information in the NTSC signal allows a receiver to tolerate a substantial amount of variation in the line frequency. So the engineers chose the line frequency to be changed for the color standard. In the black-and-white standard, the ratio of audio subcarrier frequency to line frequency is 4.5 MHz⁄15,750 Hz = 285.71. In the color standard, this becomes rounded to the integer 286, which means the color standard's line rate is 4.5 MHz⁄286 ≈ 15,734 Hz. Maintaining the same number of scan lines per field (and frame), the lower line rate must yield a lower field rate. Dividing 4500000⁄286 lines per second by 262.5 lines per field gives approximately 59.94 fields per second.
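The design constraints in the two paragraphs above can be checked with exact arithmetic: the sound offset is an integer multiple (286) of the line rate, the subcarrier is an odd multiple (455) of half the line rate, and their difference, the troublesome beat, is also an odd multiple of half the line rate. Variable names are illustrative.

```python
from fractions import Fraction

SOUND = Fraction(4_500_000)           # sound carrier offset above vision carrier, Hz

# Monochrome: 4.5 MHz / 15,750 Hz is not an integer.
print(float(SOUND / 15_750))          # about 285.71

f_h = SOUND / 286                     # colour line rate: sound = 286 x line rate
f_field = f_h / Fraction(525, 2)      # 262.5 lines per field
f_sc = f_h * Fraction(455, 2)         # subcarrier: 455 x half the line rate

# Sound-minus-subcarrier beat, expressed in units of half the line rate.
beat_in_half_lines = (SOUND - f_sc) / (f_h / 2)

print(f"line rate  {float(f_h):.2f} Hz")
print(f"field rate {float(f_field):.4f} Hz")
print(f"subcarrier {float(f_sc):.2f} Hz")
print(f"beat = {beat_in_half_lines} x (f_H / 2)")   # 117, an odd integer
```

The resulting subcarrier is exactly 315/88 MHz, the same value obtained from the 5×7×9/(8×11) construction.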

Transmission modulation method

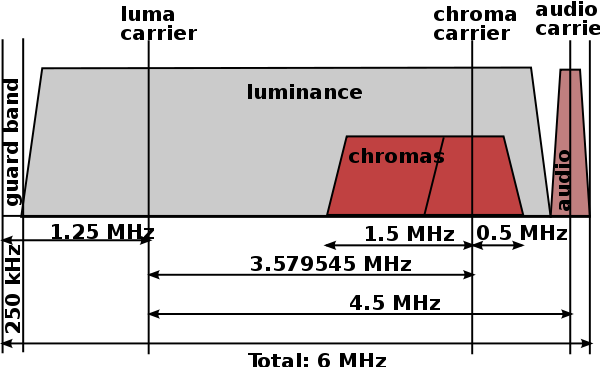

An NTSC television channel as transmitted occupies a total bandwidth of 6 MHz. The actual video signal, which is amplitude-modulated, is transmitted between 500 kHz and 5.45 MHz above the lower bound of the channel. The video carrier is 1.25 MHz above the lower bound of the channel. Like most AM signals, the video carrier generates two sidebands, one above the carrier and one below. The sidebands are each 4.2 MHz wide. The entire upper sideband is transmitted, but only 1.25 MHz of the lower sideband, known as a vestigial sideband, is transmitted. The color subcarrier, as noted above, is 3.579545 MHz above the video carrier, and is quadrature-amplitude-modulated with a suppressed carrier. The audio signal is frequency-modulated, like the audio signals broadcast by FM radio stations in the 88–108 MHz band, but with a 25 kHz maximum frequency deviation, as opposed to 75 kHz as is used on the FM band, making analog television audio signals sound quieter than FM radio signals as received on a wideband receiver. The main audio carrier is 4.5 MHz above the video carrier, making it 250 kHz below the top of the channel. Sometimes a channel may contain an MTS signal, which offers more than one audio signal by adding one or two subcarriers on the audio signal, each synchronized to a multiple of the line frequency. This is normally the case when stereo audio and/or second audio program signals are used. The same extensions are used in ATSC, where the ATSC digital carrier is broadcast at 0.31 MHz above the lower bound of the channel.
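The carrier placement described above can be summarized as fixed offsets from the lower channel edge. This sketch uses US VHF channel 2 (54 to 60 MHz) as an example; the function name is illustrative, and only the offsets stated in the paragraph are used.

```python
SUBCARRIER_MHZ = 315 / 88        # about 3.579545 MHz


def channel_plan(lower_edge_mhz):
    """Carrier frequencies (MHz) inside a 6 MHz System M channel."""
    video = lower_edge_mhz + 1.25
    return {
        "video carrier": video,
        "color subcarrier": video + SUBCARRIER_MHZ,
        "audio carrier": video + 4.5,
        "upper edge": lower_edge_mhz + 6.0,
    }


# US VHF channel 2 occupies 54-60 MHz.
for name, f in channel_plan(54.0).items():
    print(f"{name:17s} {f:.6f} MHz")
```

For channel 2 this places the vision carrier at 55.25 MHz and the main audio carrier at 59.75 MHz, 250 kHz below the top of the channel.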

"Setup" is a 54 mV (7.5 IRE) voltage offset between the "black" and "blanking" levels. It is unique to NTSC. CVBS stands for Color, Video, Blanking, and Sync.

The following table shows the values for the basic RGB colors, encoded in NTSC:[45]

| Color | Luminance level (IRE) | Chrominance level (IRE) | Chrominance amplitude (IRE) | Phase (°) |
|---|---|---|---|---|
| White | 100.0 | 0.0 | 0.0 | – |
| Yellow | 89.5 | 48.1 to 130.8 | 82.7 | 167.1 |
| Cyan | 72.3 | 13.9 to 130.8 | 116.9 | 283.5 |
| Green | 61.8 | 7.2 to 116.4 | 109.2 | 240.7 |
| Magenta | 45.7 | −8.9 to 100.3 | 109.2 | 60.7 |
| Red | 35.2 | −23.3 to 93.6 | 116.9 | 103.5 |
| Blue | 18.0 | −23.3 to 59.4 | 82.7 | 347.1 |
| Black | 7.5 | 0.0 | 0.0 | – |
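The table's values can be reproduced, to within rounding, from 100% color bars. This sketch assumes 0.299/0.587/0.114 luma weights, the conventional 0.492 and 0.877 scale factors for B′ − Y′ and R′ − Y′, and the 7.5 IRE setup with white at 100 IRE (so luminance = 7.5 + 92.5·Y′). The chrominance amplitude is taken as peak-to-peak and the phase is measured from the B′ − Y′ axis. The millivolt conversion assumes the usual 140 IRE = 1 V peak-to-peak relation; the helper names are illustrative.

```python
import math

BARS = {"White": (1, 1, 1), "Yellow": (1, 1, 0), "Cyan": (0, 1, 1),
        "Green": (0, 1, 0), "Magenta": (1, 0, 1), "Red": (1, 0, 0),
        "Blue": (0, 0, 1), "Black": (0, 0, 0)}

SETUP_IRE = 7.5
MV_PER_IRE = 1000 / 140              # assumes 140 IRE = 1 V peak-to-peak


def encode_bar(r, g, b):
    """Return (luminance IRE, peak-to-peak chroma IRE, phase in degrees or None)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    u, v = 0.492 * (b - y), 0.877 * (r - y)
    scale = 100 - SETUP_IRE          # 92.5 IRE between black and white
    luma_ire = SETUP_IRE + scale * y
    chroma_pp = 2 * scale * math.hypot(u, v)
    phase = math.degrees(math.atan2(v, u)) % 360 if chroma_pp > 1e-9 else None
    return luma_ire, chroma_pp, phase


for name, rgb in BARS.items():
    luma, amp, phase = encode_bar(*rgb)
    lo, hi = luma - amp / 2, luma + amp / 2
    ph = "-" if phase is None else f"{phase:.1f}"
    print(f"{name:8s} {luma:6.1f} {lo:6.1f} to {hi:6.1f} {amp:6.1f} {ph:>6s}")

print(f"setup = {SETUP_IRE * MV_PER_IRE:.1f} mV")   # about 54 mV
```

The chrominance level column is simply the luminance level plus and minus half the peak-to-peak amplitude, which is why saturated yellow and cyan bars reach about 131 IRE.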

Frame rate conversion

There is a large difference in frame rate between film, which runs at 24 frames per second, and the NTSC standard, which runs at approximately 29.97 (10 MHz×63/88/455/525) frames per second.

In regions that use 25-fps television and video standards, this difference can be overcome by speed-up.

For 30-fps standards, a process called "3:2 pulldown" is used. One film frame is transmitted for three video fields (lasting 1+1⁄2 video frames), and the next frame is transmitted for two video fields (lasting 1 video frame). Two film frames are thus transmitted in five video fields, for an average of 2+1⁄2 video fields per film frame. The average frame rate is thus 60 ÷ 2.5 = 24 frames per second, so the average film speed is nominally exactly what it should be. (In reality, over the course of an hour of real time, about 215,784.2 video fields are displayed at 60⁄1.001 fields per second, representing about 86,313.7 frames of film, while in an hour of true 24-fps film projection, exactly 86,400 frames are shown: thus, 29.97-fps NTSC transmission of 24-fps film runs at 1⁄1.001, or about 99.9%, of the film's normal speed.) Still-framing on playback can display a video frame with fields from two different film frames, so any difference between the frames will appear as a rapid back-and-forth flicker. There can also be noticeable jitter/"stutter" during slow camera pans (telecine judder).
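The field cadence and the resulting playback speed can be sketched as follows. This simplified version only assigns film frames to successive fields; a real telecine also has to respect field parity and dominance. The function name is illustrative.

```python
from fractions import Fraction


def pulldown_32(frames):
    """Map film frames to video fields with a 3:2 cadence (A A A B B C C C D D ...)."""
    fields = []
    for n, frame in enumerate(frames):
        fields.extend([frame] * (3 if n % 2 == 0 else 2))
    return fields


print("".join(pulldown_32("ABCD")))          # AAABBCCCDD
print(len(pulldown_32(range(24))))           # 60 fields for 24 film frames

# At the real field rate of 60/1.001 Hz, 2.5 fields per film frame gives 24/1.001 fps.
field_rate = Fraction(60_000, 1001)
film_rate = field_rate / Fraction(5, 2)
print(float(film_rate))                      # about 23.976 frames per second
print(float(film_rate / 24))                 # about 0.999 of normal speed
```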

Film shot specifically for NTSC television is usually taken at 30 (instead of 24) frames per second to avoid 3:2 pulldown.[46]

To show 25-fps material (such as European television series and some European movies) on NTSC equipment, every fifth frame is duplicated and then the resulting stream is interlaced.
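The frame-duplication step can be sketched in a few lines. This version works on whole frames at a nominal 30 fps; it ignores the 1.001 offset and the subsequent interlacing into fields, and the function name is illustrative.

```python
def duplicate_every_fifth(frames):
    """Repeat every fifth frame so that 25 frames become 30."""
    out = []
    for n, frame in enumerate(frames, start=1):
        out.append(frame)
        if n % 5 == 0:
            out.append(frame)        # the duplicated frame
    return out


one_second = list(range(25))
converted = duplicate_every_fifth(one_second)
print(len(converted))                # 30
print(converted[:12])                # [0, 1, 2, 3, 4, 4, 5, 6, 7, 8, 9, 9]
```

Because one frame in six is shown twice, smooth motion picks up a slight periodic hitch, similar in kind to telecine judder.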

Film shot for NTSC television at 24 frames per second has traditionally been accelerated by 1/24 (to about 104.17% of normal speed) for transmission in regions that use 25-fps television standards. This increase in picture speed has traditionally been accompanied by a similar increase in the pitch and tempo of the audio. More recently, frame-blending has been used to convert 24 FPS video to 25 FPS without altering its speed.
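The size of the speed-up can be put into numbers. The 120-minute running time below is only an illustrative value; the pitch rise follows from the 25/24 ratio, assuming the audio is simply played faster with no pitch correction.

```python
import math

speedup = 25 / 24
print(f"speed: {speedup:.4%}")                        # 104.1667%

semitones = 12 * math.log2(speedup)                   # pitch rise of unprocessed audio
print(f"pitch rise: {semitones:.2f} semitones")       # about 0.71

minutes = 120 * 24 / 25                               # illustrative 120-minute film
print(f"a 120-minute film runs {minutes:.1f} minutes")   # 115.2
```

A rise of about 0.7 semitone is small but audible to many listeners, which is why the pitch of familiar voices and music can sound slightly off in sped-up transfers.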

Film shot for television in regions that use 25-fps television standards can be handled in either of two ways:

- The film can be shot at 24 frames per second. In this case, when transmitted in its native region, the film may be accelerated to 25 fps according to the analog technique described above, or kept at 24 fps by the digital technique described above. When the same film is transmitted in regions that use a nominal 30-fps television standard, there is no noticeable change in speed, tempo, and pitch.

- The film can be shot at 25 frames per second. In this case, when transmitted in its native region, the film is shown at its normal speed, with no alteration of the accompanying soundtrack. When the same film is shown in regions that use a 30-fps nominal television standard, every fifth frame is duplicated, and there is still no noticeable change in speed, tempo, and pitch.

Because both film speeds have been used in 25-fps regions, viewers can face confusion about the true speed of video and audio, and the pitch of voices, sound effects, and musical performances, in television films from those regions. For example, they may wonder whether the Jeremy Brett series of Sherlock Holmes television films, made in the 1980s and early 1990s, was shot at 24 fps and then transmitted at an artificially fast speed in 25-fps regions, or whether it was shot at 25 fps natively and then slowed to 24 fps for NTSC exhibition.

These discrepancies exist not only in television broadcasts over the air and through cable, but also in the home-video market, on both tape and disc, including LaserDisc and DVD.

In digital television and video, which are replacing their analog predecessors, single standards that can accommodate a wider range of frame rates still show the limits of analog regional standards. The initial version of the ATSC standard, for example, allowed frame rates of 23.976, 24, 29.97, 30, 59.94, 60, 119.88 and 120 frames per second, but not 25 and 50. Modern ATSC allows 25 and 50 FPS.

Modulation for analog satellite transmission

Because satellite power is severely limited, analog video transmission through satellites differs from terrestrial TV transmission. AM is a linear modulation method, so a given demodulated signal-to-noise ratio (SNR) requires an equally high received RF SNR. The SNR of studio quality video is over 50 dB, so AM would require prohibitively high powers and/or large antennas.

Wideband FM is used instead to trade RF bandwidth for reduced power. Increasing the channel bandwidth from 6 to 36 MHz allows an RF SNR of only 10 dB or less. The wider noise bandwidth reduces this 40 dB power saving by 10 log10(36 MHz / 6 MHz) ≈ 8 dB, for a substantial net reduction of 32 dB.
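The decibel bookkeeping in the paragraph above can be written out explicitly. The 50 dB and 10 dB figures are the ones quoted in the text; the helper name is illustrative.

```python
import math


def db(ratio):
    """Power ratio expressed in decibels."""
    return 10 * math.log10(ratio)


studio_snr_db = 50        # demodulated video SNR that AM would need at RF
fm_rf_snr_db = 10         # RF SNR that wideband FM can work with
fm_saving_db = studio_snr_db - fm_rf_snr_db          # 40 dB
bandwidth_penalty_db = db(36 / 6)                    # noise grows with bandwidth

print(f"bandwidth penalty: {bandwidth_penalty_db:.1f} dB")               # 7.8
print(f"net saving: {fm_saving_db - bandwidth_penalty_db:.1f} dB")        # 32.2
```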

Sound is on an FM subcarrier as in terrestrial transmission, but frequencies above 4.5 MHz are used to reduce aural/visual interference; 6.8, 5.8 and 6.2 MHz are commonly used. Stereo can be multiplex, discrete, or matrix, and unrelated audio and data signals may be placed on additional subcarriers.

A triangular 60 Hz energy dispersal waveform is added to the composite baseband signal (video plus audio and data subcarriers) before modulation. This limits the satellite downlink power spectral density in case the video signal is lost. Otherwise the satellite might transmit all of its power on a single frequency, interfering with terrestrial microwave links in the same frequency band.

In half transponder mode, the frequency deviation of the composite baseband signal is reduced to 18 MHz to allow another signal in the other half of the 36 MHz transponder. This reduces the FM benefit somewhat, and the recovered SNRs are further reduced because the combined signal power must be "backed off" to avoid intermodulation distortion in the satellite transponder. A single FM signal is constant amplitude, so it can saturate a transponder without distortion.

Field order

An NTSC frame consists of two fields, F1 (field one) and F2 (field two). Field dominance depends on a combination of factors, including decisions by various equipment manufacturers as well as historical conventions. As a result, most professional equipment has the option to switch between upper-field and lower-field dominance. It is not advisable to use the terms even or odd when speaking of fields, because they are ambiguous: one system may start its line numbering at zero while another starts at one, so the same field could be called even in one system and odd in the other.[26][47]

While an analog television set does not care about field dominance per se, field dominance is important when editing NTSC video. Misinterpreting the field order can cause a shuddering effect, as moving objects jump forward and backward on successive fields.

This is of particular importance when interlaced NTSC is transcoded to a format with a different field dominance, and vice versa. Field order also matters when transcoding progressive video to interlaced NTSC. Wherever the progressive video cuts between two scenes, the interlaced video could show a flash field if the field dominance is incorrect. The film telecine process uses 3:2 pulldown to convert 24 frames per second to 30. It also gives unacceptable results if the field order is incorrect.
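The field cadence that pulldown produces can be sketched as follows. The sketch assumes the common 2:3 cadence, in which film frames are held alternately for two and three fields; the same pattern is widely called 3:2 pulldown. It also assumes top-field-first dominance. The function names are illustrative. Real telecine also applies the 1.001 rate adjustment, which is ignored here.

```python
def pulldown_fields(film_frames, cadence=(2, 3)):
    """Expand film frames into a sequence of interlaced fields.

    Film frame i is held for cadence[i % len(cadence)] fields. Each field is
    returned as (film_frame, parity), with parity alternating between
    'top' and 'bottom', starting with the top (dominant) field.
    """
    fields = []
    parity = "top"
    for i, frame in enumerate(film_frames):
        for _ in range(cadence[i % len(cadence)]):
            fields.append((frame, parity))
            parity = "bottom" if parity == "top" else "top"
    return fields


def pair_into_video_frames(fields):
    """Pair consecutive fields (dominant field first) into video frames."""
    return [(fields[i][0], fields[i + 1][0]) for i in range(0, len(fields) - 1, 2)]


film = ["A", "B", "C", "D"]               # four film frames = 1/6 s at 24 frames/s
fields = pulldown_fields(film)             # 2 + 3 + 2 + 3 = 10 fields
video = pair_into_video_frames(fields)     # 10 fields = 5 video frames = 1/6 s at 30 frames/s
print(video)
# [('A', 'A'), ('B', 'B'), ('B', 'C'), ('C', 'D'), ('D', 'D')]
```

Two of the five video frames mix fields from different film frames. If the field order is interpreted the wrong way round, the fields within those frames are shown in reverse temporal order. This is one source of the unacceptable results mentioned above.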

Material captured with an interlaced camera has a temporally unique image in each field. This makes converting interlaced video to a digital progressive-frame medium difficult, because each progressive frame shows motion artifacts on every other line. The problem can be observed in PC-based video players. It is frequently solved simply by transcoding the video at half resolution, using only one of the two available fields.
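The half-resolution workaround amounts to keeping one field and discarding the other. This is a minimal sketch that assumes a frame stored as a list of rows. Here the missing lines are filled by repeating each retained line (line doubling). That is one of several possible choices; interpolating between lines is another. The frame contents are hypothetical labels showing which instant each line was captured at.

```python
def split_fields(frame):
    """Split an interlaced frame (list of rows, top to bottom) into upper and lower fields."""
    return frame[0::2], frame[1::2]


def line_double(field):
    """Build a full-height progressive frame from one field by repeating each of its lines."""
    progressive = []
    for row in field:
        progressive.extend([row, row])
    return progressive


# Hypothetical 6-line frame: the upper field was captured at time t0 and the
# lower field one field period later at t1, so moving objects sit in different places.
frame = [f"t{row % 2} line{row}" for row in range(6)]
upper, lower = split_fields(frame)

progressive = line_double(upper)   # every line now comes from a single instant (t0)
print(progressive)
```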


NTSC-M

PAL and SECAM are used with many different underlying broadcast television systems throughout the world. NTSC color encoding, by contrast, is almost invariably used with broadcast System M, giving NTSC-M.

NTSC-N and NTSC 50

NTSC-N was originally proposed to the CCIR in the 1960s as a 50 Hz broadcast method for the System N countries Paraguay, Uruguay and Argentina, before they chose PAL. In 1978, with the introduction of the Apple II Europlus, it was effectively reintroduced as "NTSC 50", a pseudo-system combining 625-line video with 3.58 MHz NTSC color. For example, an Atari ST running PAL software on an NTSC color display used this system, because the monitor could not decode PAL color. Most analog NTSC television sets and monitors with a V-Hold knob can display this system after the vertical hold is adjusted.[48]

NTSC-J

Japan's variant, NTSC-J, is slightly different. In Japan, the black level and blanking level of the signal are identical (at 0 IRE), as they are in PAL. In American NTSC, the black level is slightly higher (7.5 IRE) than the blanking level. Since the difference is quite small, a slight turn of the brightness knob is all that is required to show the "other" variant of NTSC correctly on any set. Most viewers might not notice the difference in the first place. Channel numbering on NTSC-J also differs slightly from NTSC-M. In particular, the Japanese VHF band runs from channels 1–12, located on frequencies directly above the 76–90 MHz Japanese FM radio band. The North American VHF TV band uses channels 2–13 (54–72 MHz, 76–88 MHz and 174–216 MHz), with 88–108 MHz allocated to FM radio broadcasting. Japan's UHF TV channels are therefore numbered from 13 up rather than 14 up, but otherwise use the same UHF broadcasting frequencies as those in North America.
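Both differences can be expressed in a few lines. The sketch assumes signal levels normalized so that 100 IRE is white. It shows why an NTSC-M black (7.5 IRE) appears slightly above black on a set expecting NTSC-J levels. The channel table uses the North American VHF ranges given above. The 470 MHz lower edge of UHF channel 14 is standard North American practice but is not stated in the text. The function names are illustrative.

```python
def ire_to_luma(ire: float, setup_ire: float) -> float:
    """Normalize a signal level in IRE so that black = 0.0 and white (100 IRE) = 1.0."""
    return (ire - setup_ire) / (100.0 - setup_ire)


NTSC_M_SETUP_IRE = 7.5   # North American NTSC: black sits 7.5 IRE above blanking
NTSC_J_SETUP_IRE = 0.0   # NTSC-J: black level equals blanking level

# An NTSC-M black (7.5 IRE) on a set expecting NTSC-J levels shows as a slightly
# raised black, the small offset a brightness adjustment compensates for.
print(ire_to_luma(7.5, NTSC_M_SETUP_IRE))   # 0.0
print(ire_to_luma(7.5, NTSC_J_SETUP_IRE))   # 0.075


def north_american_channel_mhz(channel: int) -> tuple[int, int]:
    """Lower and upper edge, in MHz, of a 6 MHz North American TV channel."""
    if 2 <= channel <= 4:
        low = 54 + 6 * (channel - 2)        # 54-72 MHz
    elif 5 <= channel <= 6:
        low = 76 + 6 * (channel - 5)        # 76-88 MHz
    elif 7 <= channel <= 13:
        low = 174 + 6 * (channel - 7)       # 174-216 MHz
    elif channel >= 14:
        low = 470 + 6 * (channel - 14)      # UHF, starting at 470 MHz (assumed, see lead-in)
    else:
        raise ValueError(f"no North American TV channel {channel}")
    return low, low + 6


def japanese_uhf_channel_mhz(channel: int) -> tuple[int, int]:
    """Japanese UHF channel N occupies the same frequencies as North American channel N + 1."""
    if channel < 13:
        raise ValueError("Japanese UHF channels start at 13")
    return north_american_channel_mhz(channel + 1)


print(north_american_channel_mhz(14), japanese_uhf_channel_mhz(13))  # (470, 476) (470, 476)
```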

NTSC 4.43

NTSC 4.43 is a pseudo-system that transmits an NTSC color subcarrier of 4.43 MHz instead of 3.58 MHz.[49] The output can be viewed only on TVs that support the pseudo-system (such as most PAL TVs).[50] Decoding the signal on a native NTSC TV yields no color. Decoding it on an incompatible PAL TV yields erratic colors (observed to be lacking red and flickering randomly). The format was used by USAF TV in Germany during the Cold War and by Hong Kong Cable Television.[citation needed] It was also found as an optional output on some LaserDisc players sold in markets where the PAL system is used.

The NTSC 4.43 system, while not a broadcast format, appears most often as a playback function of PAL cassette-format VCRs. It began with the Sony 3/4" U-Matic format and was later carried over to Betamax and VHS machines, commonly advertised as "NTSC playback on PAL TV".

Multi-standard video monitors were already in use in Europe to accommodate broadcast sources in PAL, SECAM, and NTSC video formats. The heterodyne color-under process of U-Matic, Betamax and VHS lent itself to minor modification of VCR players to accommodate NTSC-format cassettes. VHS uses a 629 kHz color-under subcarrier, while U-Matic and Betamax use a 688 kHz subcarrier. In both cases the subcarrier carries an amplitude-modulated chroma signal for NTSC and PAL formats alike. The VCR could already play the color portion of the NTSC recording in PAL color mode. Therefore only the scanner and capstan speeds had to be adjusted, from PAL's 50 Hz field rate to NTSC's 59.94 Hz field rate, together with a faster linear tape speed.
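The heterodyne arithmetic and the speed change can be sketched as follows. The sketch models an ideal mixer only. Real color-under systems add phase-rotation or phase-alternation tricks and filtering, which are ignored here. The PAL and NTSC subcarrier values (4.43361875 MHz and 315/88 MHz) are the standard figures rather than ones stated in the text. The drum-speed function assumes a two-head helical-scan drum that makes one revolution per frame, as in VHS.

```python
from fractions import Fraction

PAL_FSC_HZ = 4_433_618.75        # PAL color subcarrier, the "4.43 MHz" of NTSC 4.43
NTSC_FSC_HZ = 315e6 / 88         # NTSC color subcarrier, about 3.58 MHz
VHS_COLOR_UNDER_HZ = 629e3       # VHS color-under frequency quoted in the text


def heterodyne(signal_hz: float, local_osc_hz: float) -> tuple[float, float]:
    """Ideal mixer: return the (difference, sum) frequencies of its two inputs."""
    return abs(local_osc_hz - signal_hz), local_osc_hz + signal_hz


# Recording: mixing 3.58 MHz chroma with an oscillator at fsc + 629 kHz moves it
# down to the color-under band (the difference term is kept, the sum filtered out).
recorded_hz, _ = heterodyne(NTSC_FSC_HZ, NTSC_FSC_HZ + VHS_COLOR_UNDER_HZ)

# Playback on a PAL machine: the same color-under signal mixed with an oscillator
# referenced to the PAL subcarrier comes back out at 4.43 MHz instead of 3.58 MHz.
played_back_hz, _ = heterodyne(recorded_hz, PAL_FSC_HZ + VHS_COLOR_UNDER_HZ)


def drum_rpm(frame_rate: Fraction) -> Fraction:
    """Head-drum speed, assuming one drum revolution per video frame (two heads, one field each)."""
    return frame_rate * 60


pal_rpm = drum_rpm(Fraction(25))               # 50 Hz fields -> 1500 rpm
ntsc_rpm = drum_rpm(Fraction(30_000, 1_001))   # 59.94 Hz fields -> about 1798.2 rpm

print(f"color-under: {recorded_hz / 1e3:.0f} kHz, played back at {played_back_hz / 1e6:.5f} MHz")
print(f"drum speed: PAL {float(pal_rpm):.1f} rpm, NTSC {float(ntsc_rpm):.1f} rpm")
```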

The changes to the PAL VCR are minor thanks to the existing VCR recording formats. When playing an NTSC cassette in NTSC 4.43 mode, the VCR outputs 525 lines at 29.97 frames per second with PAL-compatible heterodyned color. The multi-standard receiver is already set up to handle the NTSC horizontal and vertical frequencies; it just needs to do so while receiving PAL color.

The existence of those multi-standard receivers was probably part of the drive for region coding of DVDs. The color signals are stored on the disc in component form for all display formats. As a result, PAL DVD players would need almost no changes to play NTSC (525/29.97) discs, as long as the display was frame-rate compatible.

OSKM (USSR-NTSC)

In January 1960, seven years before the adoption of the modified SECAM version, the experimental TV studio in Moscow started broadcasting using the OSKM system. OSKM was a version of NTSC adapted to the European D/K 625/50 standard. The abbreviation OSKM stands for "Simultaneous system with quadrature modulation" (Russian: Одновременная Система с Квадратурной Модуляцией). It used the color coding scheme that was later used in PAL (U and V instead of I and Q).

The color subcarrier frequency was 4.4296875 MHz, and the bandwidth of the U and V signals was about 1.5 MHz.[51] Only about 4,000 TV sets of four models (Raduga,[52] Temp-22, Izumrud-201 and Izumrud-203[53]) were produced, to study the real-world quality of TV reception. These sets were not commercially available, despite being listed in the goods catalog of the USSR trade network.
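The OSKM subcarrier value is easier to interpret when divided by the 625/50 line rate of 15,625 Hz. It comes out to exactly 283.5, an odd multiple of half the line rate. NTSC uses the same kind of half-line offset, at 227.5 times its line rate. Whether this was the designers' reasoning is an assumption. The sketch below only checks the arithmetic. It uses the standard NTSC line rate of 4.5 MHz / 286 and subcarrier of 315/88 MHz, which are not stated in the text.

```python
from fractions import Fraction


def subcarrier_to_line_rate(fsc_hz: Fraction, line_rate_hz: Fraction) -> Fraction:
    """Ratio of a color subcarrier to the horizontal line rate, kept as an exact fraction."""
    return fsc_hz / line_rate_hz


# OSKM: 4.4296875 MHz subcarrier on the 625-line, 25 frames/s (D/K) raster.
oskm_ratio = subcarrier_to_line_rate(Fraction("4429687.5"), Fraction(625 * 25))

# NTSC, for comparison: line rate 4.5 MHz / 286, subcarrier 315/88 MHz.
ntsc_ratio = subcarrier_to_line_rate(Fraction(315_000_000, 88), Fraction(4_500_000, 286))

print(oskm_ratio, float(oskm_ratio))   # 567/2 283.5
print(ntsc_ratio, float(ntsc_ratio))   # 455/2 227.5
```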

Broadcasting with this system lasted about three years and ceased well before SECAM transmissions started in the USSR. No current multi-standard TV receiver supports this TV system.

NTSC-film


Film content, commonly shot at 24 frames/s, can be converted to 30 frames/s through the telecine process, which duplicates frames as needed.

Mathematically, this is relatively simple for NTSC, because only every fourth frame needs to be duplicated. Various techniques are employed. NTSC with an actual frame rate of 24⁄1.001 (approximately 23.976) frames/s is often defined as NTSC-film. A process known as pullup, also known as pulldown, generates the duplicated frames upon playback. This method is common for H.262/MPEG-2 Part 2 digital video. It preserves the original content, which can be played back on equipment that can display it or converted for equipment that cannot.
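The rate relationships above work out exactly with rational arithmetic. This is a minimal sketch. `duplicate_every_fourth` is a hypothetical helper that describes the conversion at the whole-frame level, as the text does. Broadcast telecine actually distributes the extra material as fields.

```python
from fractions import Fraction

NTSC_SLOWDOWN = Fraction(1000, 1001)          # the NTSC "1.001" rate adjustment

film_rate = Fraction(24) * NTSC_SLOWDOWN      # NTSC-film: 24/1.001, about 23.976 frames/s
video_rate = Fraction(30) * NTSC_SLOWDOWN     # NTSC video: 30/1.001, about 29.97 frames/s
print(float(film_rate), float(video_rate))
print(video_rate / film_rate)                 # 5/4: five video frames per four film frames


def duplicate_every_fourth(frames):
    """Turn 24 frames into 30 by repeating every fourth frame (frame-level view of pulldown)."""
    out = []
    for count, frame in enumerate(frames, start=1):
        out.append(frame)
        if count % 4 == 0:
            out.append(frame)   # the extra frame that pulldown generates on playback
    return out


one_second_of_film = list(range(24))
print(len(duplicate_every_fourth(one_second_of_film)))   # 30
```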



For NTSC, and to a lesser extent PAL, reception problems can degrade the color accuracy of the picture. Ghosting can dynamically change the phase of the color burst with picture content, thus altering the color balance of the signal. The only receiver compensation is in the professional ghost-canceling circuits used by cable companies. The vacuum-tube electronics used in televisions through the 1960s led to various technical problems; among other things, the color burst phase would often drift. In addition, TV studios did not always transmit properly, leading to hue changes when channels were changed. This is why NTSC televisions were equipped with a tint control. PAL and SECAM televisions had less need for one. SECAM in particular was very robust. PAL was excellent at maintaining skin tones, to which viewers are particularly sensitive, but it would nevertheless distort other colors in the face of phase errors. Under phase errors, only "Deluxe PAL" receivers would eliminate the "Hanover bars" distortion. Hue controls are still found on NTSC TVs, but by the 1970s color drift had generally ceased to be a problem with more modern circuitry. When compared to PAL in particular, NTSC color accuracy and consistency were sometimes considered inferior. Video professionals and television engineers jokingly expanded NTSC as Never The Same Color, Never Twice the Same Color, or No True Skin Colors,[54] while for the more expensive PAL system it was necessary to Pay for Additional Luxury.[citation needed]
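The reason a phase error hurts NTSC more than PAL can be sketched with chroma modelled as a phasor. The model is simplified, with U on the real axis and V on the imaginary axis; NTSC's I/Q axes are a rotated version of the same plane. The color and the 10 degree error are hypothetical. Under these assumptions, NTSC shows the full error as a hue shift. Averaging two successive PAL lines, as a delay-line ("Deluxe PAL") receiver does, keeps the hue and only slightly reduces saturation.

```python
import cmath
import math


def hue_deg(chroma: complex) -> float:
    """Hue angle of a chroma phasor, in degrees."""
    return math.degrees(cmath.phase(chroma))


# Simplified model: chroma as a phasor (U on the real axis, V on the imaginary axis).
# Hypothetical color with saturation 0.5 and hue 120 degrees.
chroma = cmath.rect(0.5, math.radians(120))
phase_error = math.radians(10)          # hypothetical error from ghosting or burst drift
rotate = cmath.exp(1j * phase_error)    # the channel rotates every chroma phasor by the error

# NTSC: every line suffers the same rotation, so the hue itself shifts by 10 degrees.
ntsc_received = chroma * rotate

# PAL: V is inverted on alternate lines (the conjugate is transmitted). The receiver
# re-inverts it, which turns the +10 degree error on that line into -10 degrees.
line_a = chroma * rotate
line_b = (chroma.conjugate() * rotate).conjugate()
pal_averaged = (line_a + line_b) / 2    # delay-line averaging of two successive lines

print(f"original: hue {hue_deg(chroma):.1f}, saturation {abs(chroma):.3f}")
print(f"NTSC:     hue {hue_deg(ntsc_received):.1f}, saturation {abs(ntsc_received):.3f}")
print(f"PAL:      hue {hue_deg(pal_averaged):.1f}, saturation {abs(pal_averaged):.3f}")
```

Without averaging, successive PAL lines would show hues of 130 and 110 degrees. When the error is too large for the eye to blend them, the alternation becomes visible as Hanover bars.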

S-Video systems using NTSC-coded color, and closed-circuit composite NTSC, both eliminate these phase distortions. A closed-circuit system has no reception ghosting to smear the color burst. For VHS videotape in any of the three color systems, S-Video gives higher horizontal resolution on monitors and TVs that lack a high-quality motion-compensated comb filter. (The NTSC resolution on the vertical axis is lower than that of the European standards: 525 lines against 625.) However, S-Video uses too much bandwidth for over-the-air transmission. The Atari 800 and Commodore 64 home computers generated S-video. It could be used only with specially designed monitors, because no TV at the time supported separate chroma and luma on standard RCA jacks. In 1987, a standardized four-pin mini-DIN socket for S-video input was introduced with S-VHS players, the first devices to use the four-pin plugs. However, S-VHS never became very popular. Video game consoles in the 1990s began offering S-video output as well.


The standard NTSC video image contains some lines (lines 1–21 of each field) that are not visible; together they form the vertical blanking interval (VBI). All are beyond the edge of the viewable image, but only lines 1–9 are used for the vertical-sync and equalizing pulses. The remaining lines were deliberately blanked in the original NTSC specification to give the electron beam in CRT screens time to return to the top of the display.

VIR (vertical interval reference) was widely adopted in the 1980s. It attempts to correct some of the color problems with NTSC video by adding studio-inserted reference data for luminance and chrominance levels on line 19.[55] Suitably equipped television sets could then use these data to adjust the display to a closer match of the original studio image. The VIR signal contains three sections. The first has 70 percent luminance and the same chrominance as the color burst signal. The other two have 50 percent and 7.5 percent luminance, respectively.[56]
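The text does not say exactly how suitably equipped sets used the VIR data. This is a minimal sketch that assumes a simple linear gain-and-offset correction, derived from the 50 and 7.5 percent sections. The 70 percent section, with its burst-phase chrominance, could be used in a similar way to estimate a hue error. That step is omitted here. The measured levels and the function names are hypothetical.

```python
# Nominal luminance of two VIR sections, in percent, as given in the text.
VIR_HIGH_REF = 50.0
VIR_LOW_REF = 7.5


def luminance_correction(measured_high: float, measured_low: float) -> tuple[float, float]:
    """Return (gain, offset) that map the measured VIR levels back onto their references."""
    gain = (VIR_HIGH_REF - VIR_LOW_REF) / (measured_high - measured_low)
    offset = VIR_LOW_REF - gain * measured_low
    return gain, offset


def correct(level: float, gain: float, offset: float) -> float:
    """Apply the linear correction to a received luminance level."""
    return gain * level + offset


# Hypothetical received levels: the transmission path has reduced contrast and lifted black.
gain, offset = luminance_correction(measured_high=46.0, measured_low=9.0)
print(f"gain {gain:.3f}, offset {offset:+.3f}")
print(correct(46.0, gain, offset), correct(9.0, gain, offset))
```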

A less-used successor to VIR, GCR (ghost-canceling reference), also added ghost (multipath interference) removal capabilities.

The remaining vertical blanking interval lines are typically used for datacasting or ancillary data such as video editing timestamps (vertical interval timecodes or SMPTE timecodes on lines 12–14[57][58]), test data on lines 17–18, a network source code on line 20 and closed captioning, XDS, and V-chip data on line 21. Early teletext applications also used vertical blanking interval lines 14–18 and 20, but teletext over NTSC was never widely adopted by viewers.[59]
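The line assignments listed above can be collected into a simple lookup table. This is a sketch only. The assignments are the typical ones described in this article rather than a normative allocation, and `uses_of_line` is a hypothetical helper.

```python
# Typical uses of NTSC vertical blanking lines (per field), as described above.
# Each entry: (first line, last line inclusive, use).
VBI_LINE_USES = [
    (1, 9, "vertical sync and equalizing pulses"),
    (12, 14, "vertical interval timecode (VITC / SMPTE timecode)"),
    (14, 18, "early teletext"),
    (17, 18, "test signals"),
    (19, 19, "VIR (vertical interval reference)"),
    (20, 20, "network source code"),
    (20, 20, "early teletext"),
    (21, 21, "closed captioning, XDS and V-chip data"),
]


def uses_of_line(line: int) -> list[str]:
    """List the documented uses of one VBI line; an empty list means no fixed assignment."""
    if not 1 <= line <= 21:
        raise ValueError(f"line {line} is outside the vertical blanking interval (lines 1-21)")
    return [use for first, last, use in VBI_LINE_USES if first <= line <= last]


for line in (5, 10, 14, 21):
    print(line, uses_of_line(line) or ["unassigned here (e.g. free for datacasting)"])
```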

Many stations transmit TV Guide On Screen (TVGOS) data for an electronic program guide on VBI lines. The primary station in a market broadcasts four lines of data, and backup stations broadcast one line. In most markets the PBS station is the primary host. TVGOS data can occupy any line from 10 to 25, but in practice it is limited to lines 11–18, 20 and 22. Line 22 is used by only two broadcasters, DirecTV and CFPL-TV.

TiVo data is also transmitted in some commercials and program advertisements so that customers can automatically record the program being advertised. It is also used in weekly half-hour paid programs on Ion Television and the Discovery Channel, which highlight TiVo promotions and advertisers.



Below are countries and territories that currently use or once used the NTSC system. Many of these have switched or are currently switching from NTSC to digital television standards such as ATSC (United States, Canada, Mexico, Suriname, Jamaica, South Korea, Saint Lucia, Bahamas, Barbados, Grenada, Antigua and Barbuda, Haiti), ISDB (Japan, Philippines, part of South America and Saint Kitts and Nevis), DVB-T (Taiwan, Panama, Colombia, Myanmar, and Trinidad and Tobago) or DTMB (Cuba).

American Samoa[60]

Anguilla[60]

Antigua and Barbuda[60]

Aruba[60]

Bahamas[60]

Barbados[60]

Belize[60]

Bermuda[60] (Over-the-air NTSC broadcasts on channel 9 were terminated in March 2016; local broadcast stations have switched to digital channels 20.1 and 20.2)[61]

Bolivia[60]

Bonaire[60]

British Virgin Islands[60]

Canada[60] (Over-the-air NTSC broadcasting in major cities ceased in August 2011 by legislative fiat, to be replaced with ATSC. Some one-station markets, and markets served only by full-power repeaters, remain analog.[62])

Caribbean Netherlands[60]

Cayman Islands[60]

Chile[60] (Analog shutoff occurred in 2024,[63] now switching to ISDB-Tb)

Colombia[60] (Analog shutoff scheduled for 2022, simulcasting DVB-T)

Costa Rica[60] (NTSC broadcast to be abandoned by December 2018, simulcasting ISDB-Tb)

Cuba[60]

Curaçao[60]

Dominica[60]

Dominican Republic[60] (Over-the-air NTSC broadcasting scheduled to be abandoned by 2021, simulcast in ATSC)[64]

Ecuador[60]

El Salvador (Over-the-air NTSC broadcasting scheduled to be abandoned by December 2024, simulcast in ISDB-Tb)[65]

Grenada[60]

Guam[60]

Guatemala[60]

Guyana[60]

Haiti[60]

Honduras[60] (Over-the-air NTSC broadcasting scheduled to be abandoned by December 2020, simulcast in ISDB-Tb)

Jamaica[60] (Will convert to ATSC 3.0 instead of 1.0. The conversion will begin in 2022 and is expected to be completed by 2023)[66]

Japan[60] (fully switched to ISDB in 2012, after the 2011 Tōhoku earthquake and tsunami delayed the planned 2011 rollout in three prefectures)

Marshall Islands[60] (in Compact of Free Association with US; US aid funded NTSC adoption)

Mexico (Plans to transition from NTSC were announced on July 2, 2004,[67] and conversion started in 2013.[68] The full transition was scheduled for December 31, 2015,[69] but technical and economic issues with some transmitters led to an extension to December 31, 2016.)

Micronesia[60] (in Compact of Free Association with US, transitioning to DVB-T)

Midway Atoll (a US military base)

Montserrat[60]

Myanmar (also used PAL)

Nicaragua[60]

Northern Mariana Islands

Palau[60] (in Compact of Free Association with US; adopted NTSC before independence)

Panama[60] (NTSC broadcasts to be abandoned by 2020, simulcasting DVB-T; NTSC broadcasts to be abandoned in areas where DVB-T reception exceeds 90%)

Peru[60] (NTSC broadcast to be abandoned by December 31, 2017, simulcasting ISDB-Tb)[70]

Philippines[60] (NTSC broadcasting was intended to be abandoned at the end of 2015. However, in late 2014 the deadline was postponed to 2019,[71] and later extended to 2023.[72][73][74][75][76] All analog broadcasts are expected to be shut off by the end of 2024 or by 2026.[77][78][79][80] Broadcasters will simulcast in ISDB-T.)

Puerto Rico[60] (now uses ATSC)

Saint Kitts and Nevis[60]

Saint Lucia[60] (Exited NTSC on March 5, 2024)

Saint Vincent and the Grenadines[60]

Saudi Arabia (simulcast in NTSC, SECAM and PAL, before switching to PAL in the early 1990s)

Sint Maarten[60] (Also uses DVB-T2 with 8 MHz channel spacing, the same spacing as in the European Netherlands, for an encrypted terrestrial digital TV subscription service via WTN CABLE)

South Korea

Suriname[60]

Trinidad and Tobago[60] (Will convert to ATSC 3.0 instead of 1.0. The conversion will begin in 2023 and is expected to be completed by 2026)[81]

Turks and Caicos Islands[60]

United States[60] (Full-power over-the-air NTSC broadcasting was switched off on June 12, 2009,[82][83] in favor of ATSC. Class A stations were switched off on September 1, 2015. Translators and other low-power stations were supposed to transition on the same day, but this was postponed to July 13, 2021, because of a spectrum auction.[84] Most remaining analog cable television systems are unaffected.)[85]

United States Virgin Islands

Venezuela[60]

Experimented

Brazil (Between 1962 and 1963, Rede Tupi and Rede Excelsior made the first unofficial transmissions in color, in specific programs in the city of São Paulo, before the official adoption of PAL-M by the Brazilian Government on February 19, 1972)

Paraguay

United Kingdom (Experimented with a 405-line variant of NTSC before choosing 625-line PAL for color broadcasting)

Countries and territories that have ceased using NTSC

The following countries and regions no longer use NTSC for terrestrial broadcasts.

| Country | Switched to | Switchover completed |
|---|---|---|
| Bermuda | DVB-T | March 1, 2016 |
| Canada | ATSC | August 31, 2011 (select markets) |
| Chile | ISDB-Tb | April 9, 2024[86] |
|  | ISDB-Tb | August 15, 2019 |
|  | ISDB-Tb | December 31, 2019[87] |
| Japan | ISDB-T | March 31, 2012 |
| Mexico | ATSC | December 31, 2015 (full-power stations)[88] |
| Saint Lucia | ATSC | March 5, 2024 |
|  | ATSC | December 31, 2012 |
|  | ATSC | 2015[89] |
|  | DVB-T | June 30, 2012 |
| United States | ATSC | June 12, 2009 (full-power stations);[83] September 1, 2015 (Class A stations); July 13, 2021 (low-power stations)[90] |

See also

- ATSC, the successor committee to NTSC that deals with digital television broadcast standards

- Broadcast-safe

- Composite artifact colors

- Glossary of video terms

- List of common resolutions § Television and media

- List of video connectors

- NTSC-C

- Television channel frequencies
